Helping to revive wargaming in the Royal Navy – an update: Games in a COVID world

22/04/2021

Lieutenant Commander Ed Oates, recent PhD graduate at Cranfield Defence and Security and helicopter simulator instructor at RNAS Culdrose in Cornwall, previously shared his experiences in using knowledge learnt at Cranfield to drive a wargaming revival in the Royal Navy. Dr Oates now provides an update on using wargames during the COVID pandemic:

I’ve had a lot of fun with games at work, and I’m pleased to say that over the last year we’ve just about been able to keep games going as a training tool, using vinyl gloves, face masks and visors to reduce the risk of COVID transmission. It’s not been easy, and I wondered what could be passed on about ‘games in a COVID world’. The feedback from the students and from their parent units/commands has been positive as well. Games can give a route to learning and retention of knowledge, skills and attitudes, and when underpinned by the Defence Systems Approach to Training (JSP 822), they form targeted media within a coherent course of training objectives.

There’s no doubt in my mind that the human-to-human interaction of playing a game opposite your opponent is a powerful element of the learning process. There’s a ‘UK Fight Club’ blog on Defence Connect that promotes the use of games in the military and organises online lectures, where others have expressed similar findings. Classroom lessons have presented the process for organising a Section Attack, for instance; the process has then been walked through over real terrain, and finally implemented in a Closed Wargame: Red Cell vs Blue Cell. It’s only in the Wargame that students have changed their plans as a direct response to the fact that they now have a thinking opponent.

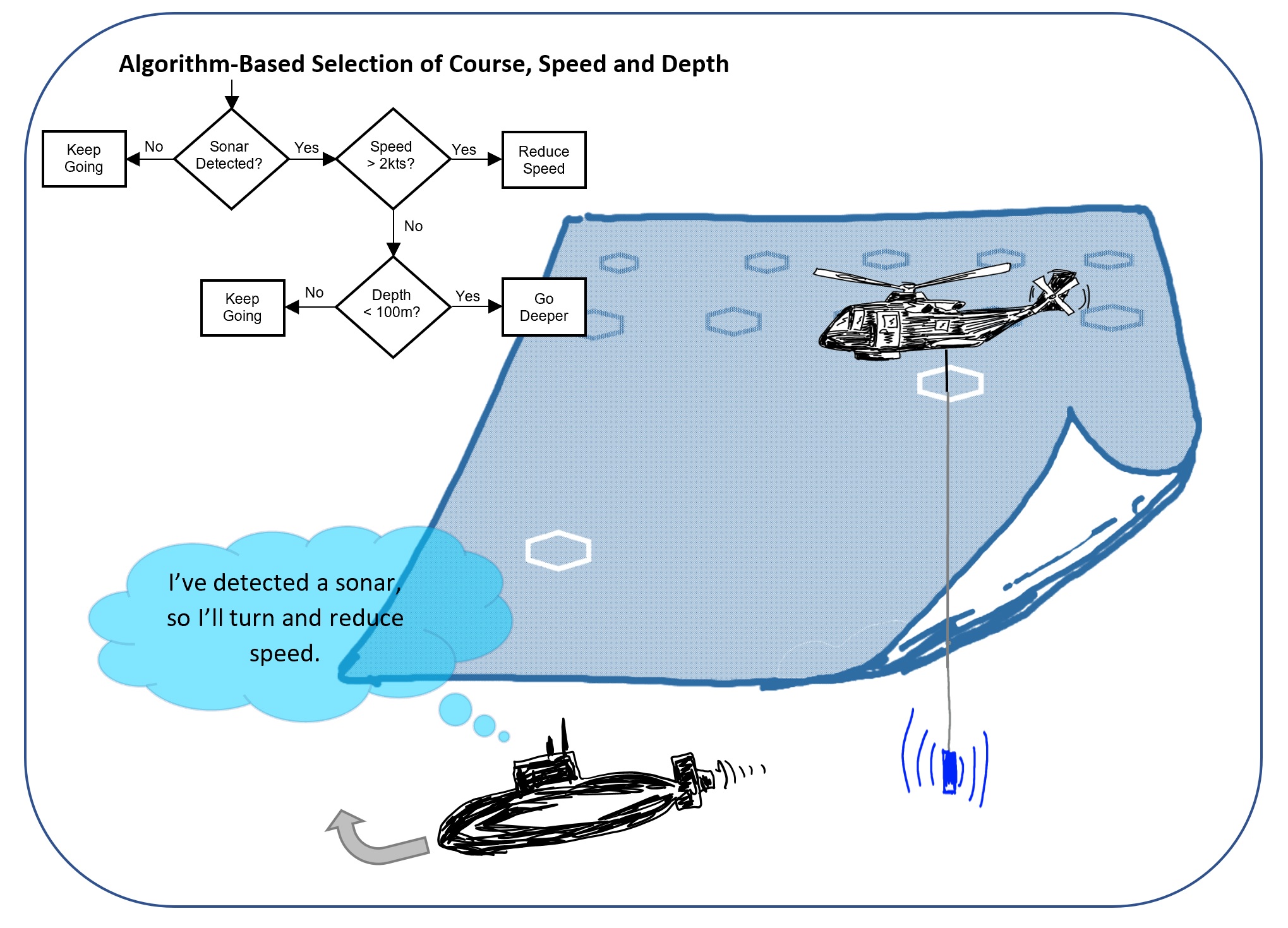

This translates well to a multi-player computer game, where the human opposition is still there, though not eyeball to eyeball. But how does this work in a single-player computer game like the Submarine Hunting Game in the featured image above?

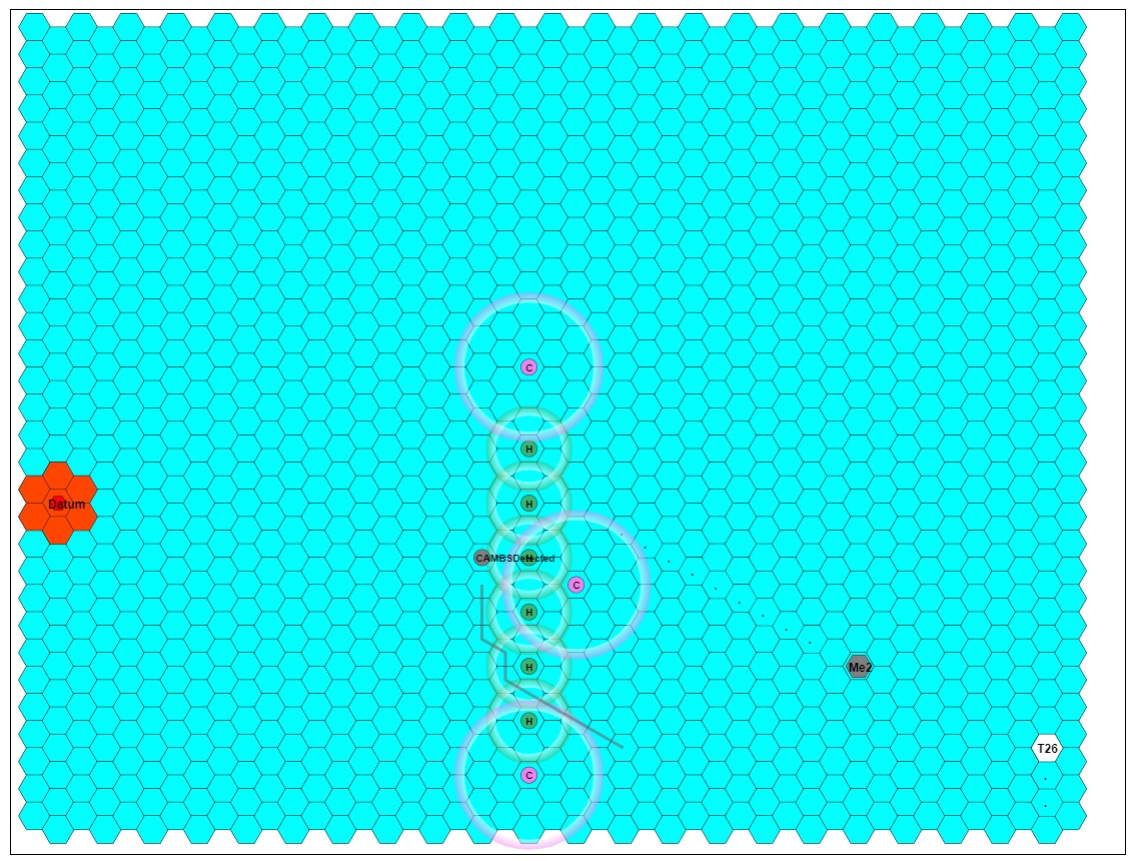

From the games that I’ve built to run in the MoDNET (MOD intranet) web browser, I’ve taken two routes so far: Random Selection, and Algorithmic Selection.

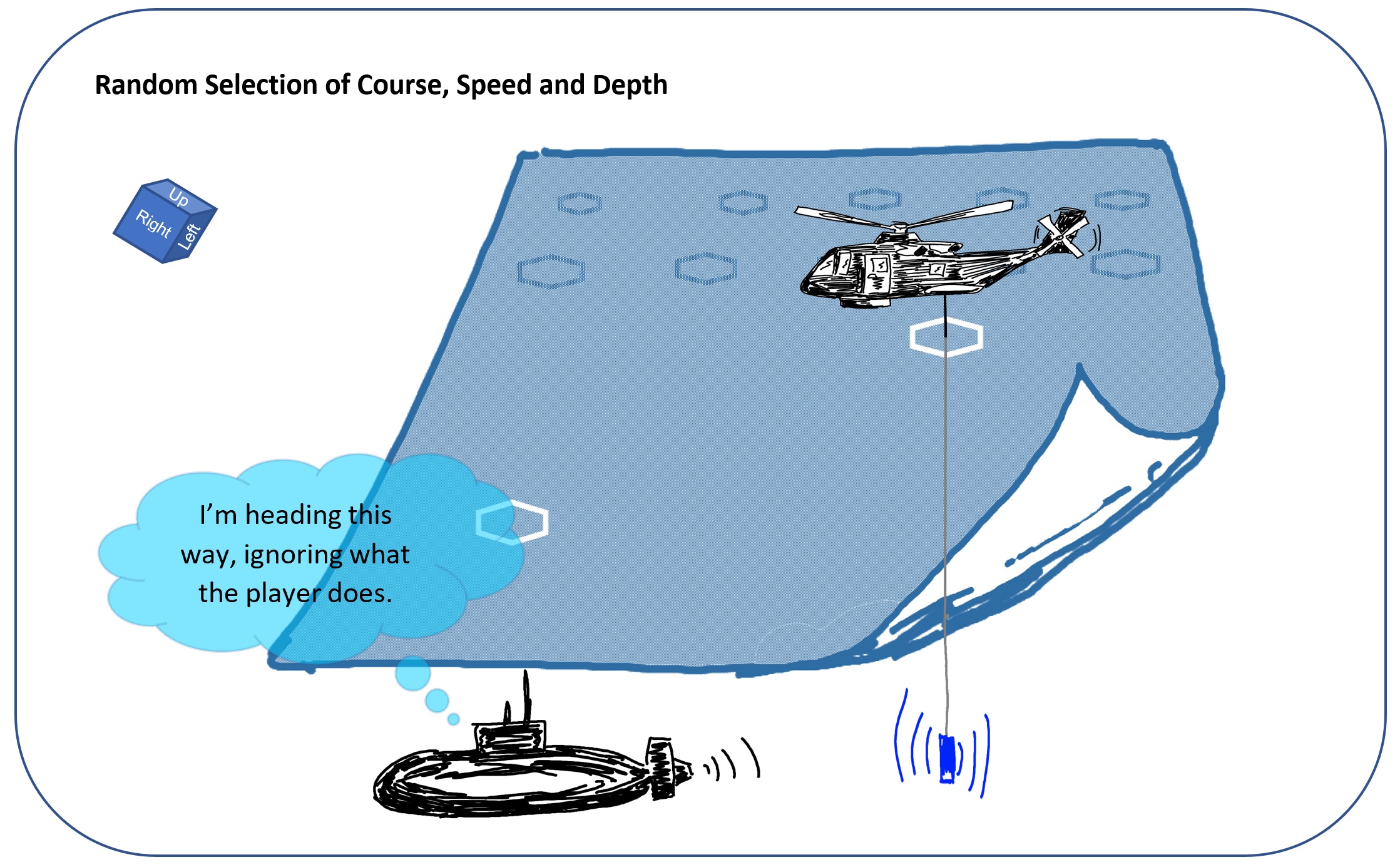

The first draws from a range of plausible actions (positions, headings, and speeds), and the learning takes place in a scenario where the opposition is free to roam, following its randomly selected pre-set routes and actions. Varying levels of difficulty are built into the game levels to provide increasing challenge for the students, and the opposition’s ‘randomness’ changes as part of this challenge.
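To make the Random Selection idea concrete, here is a minimal Python sketch. It is not the code from my games: the route names, waypoints, speeds and the `pick_opposition_plan` function are all made up for illustration, and the assumption is simply that higher levels open up more pre-set routes and speeds for the random draw.

```python
import random

# Hypothetical pre-set routes, each a list of (x, y) waypoints in nautical miles.
PRESET_ROUTES = {
    "north_transit": [(0, 0), (0, 10), (5, 20)],
    "coastal_creep": [(0, 0), (8, 2), (15, 3)],
    "zigzag": [(0, 0), (5, 5), (0, 10), (5, 15)],
}

# Assumed difficulty table: each level widens the pool the opposition draws from.
LEVELS = {
    1: {"routes": ["north_transit"], "speeds_kn": [5]},
    2: {"routes": ["north_transit", "coastal_creep"], "speeds_kn": [5, 8]},
    3: {"routes": list(PRESET_ROUTES), "speeds_kn": [4, 8, 12]},
}


def pick_opposition_plan(level, rng=None):
    """Randomly select a pre-set route and speed allowed at this level."""
    rng = rng or random.Random()
    cfg = LEVELS[level]
    name = rng.choice(cfg["routes"])
    return {
        "route": name,
        "waypoints": PRESET_ROUTES[name],
        "speed_kn": rng.choice(cfg["speeds_kn"]),
    }


if __name__ == "__main__":
    for level in LEVELS:
        print(level, pick_opposition_plan(level))
```

Passing in a seeded `random.Random` makes a given game repeatable, which could be handy when debriefing a student on a particular run.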

The other uses a pre-programmed algorithm of ‘Actions On’ for the computer-controlled opposition.

This gives a range of responses based on the way that the student approaches the military task but will always be seen to follow a similar pattern if played long enough. There’s still a bit of randomness built in for the initial setting but after that it’s the students themselves that affect the way the opposition reacts. If the student leaves a gap, the opposition will find it and exploit it.
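A rule-based ‘Actions On’ routine of this kind might be sketched as follows. This is an illustration under simplifying assumptions rather than the algorithm in my games: the playing surface is reduced to a line of numbered transit lanes, the student’s sensors sit at points along that line, and the action names (`continue`, `exploit_gap`, `go_quiet`) are hypothetical.

```python
def actions_on(current_lane, lanes, sensors, detection_range):
    """Pick the opposition's next action from fixed rules.

    current_lane, lanes and sensors are positions along one axis;
    detection_range is how far a sensor can 'see' along that axis.
    """

    def clearance(lane):
        # Distance from this lane to the nearest student sensor.
        return min((abs(lane - s) for s in sensors), default=float("inf"))

    # Rule 1: if not currently covered by a sensor, carry on.
    if clearance(current_lane) > detection_range:
        return ("continue", current_lane)

    # Rule 2: if the student has left a gap, move to the widest one.
    best = max(lanes, key=clearance)
    if clearance(best) > detection_range:
        return ("exploit_gap", best)

    # Rule 3: every lane is covered, so stay put and reduce noise.
    return ("go_quiet", current_lane)


if __name__ == "__main__":
    lanes = [0, 5, 10, 15, 20]
    print(actions_on(10, lanes, sensors=[8, 10], detection_range=3))
```

Because the rules are fixed, the same sensor layout always produces the same response, which is why a student who plays for long enough will start to see the pattern.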

What I haven’t tried so far is to use machine learning as the basis for controlling the opposition. This would take control of the opposition away from the game-maker. I would code the input sources, those intelligence sources or significant facts, then after that the opposition would build its own picture of the playing surface and consult its own long-term ‘memory’ from a growing number of games played.
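As a thought experiment only, the simplest form of such a ‘memory’ might look like the Python sketch below. It is a plain tally of wins per observation and action, which is a long way short of real machine learning, and every name in it (`OppositionMemory`, the observation and action strings) is hypothetical. Observations are assumed to be short strings so that the memory can be saved as JSON.

```python
import json
import random
from collections import defaultdict


class OppositionMemory:
    """Tallies how often each action 'won' for a given observation."""

    def __init__(self):
        # (observation, action) -> [wins, games played]
        self.stats = defaultdict(lambda: [0, 0])

    def record(self, observation, action, won):
        entry = self.stats[(observation, action)]
        entry[0] += int(won)
        entry[1] += 1

    def choose(self, observation, actions, rng, explore=0.1):
        # Occasionally try something at random so new options get tested.
        if rng.random() < explore:
            return rng.choice(actions)

        def win_rate(action):
            wins, games = self.stats.get((observation, action), (0, 0))
            return wins / games if games else 0.5  # untried actions are neutral

        return max(actions, key=win_rate)

    def to_json(self):
        rows = [[o, a, w, g] for (o, a), (w, g) in self.stats.items()]
        return json.dumps(rows)

    @classmethod
    def from_json(cls, text):
        memory = cls()
        for o, a, w, g in json.loads(text):
            memory.stats[(o, a)] = [w, g]
        return memory


if __name__ == "__main__":
    memory = OppositionMemory()
    memory.record("buoys_north", "go_south", won=True)
    memory.record("buoys_north", "go_north", won=False)
    print(memory.choose("buoys_north", ["go_north", "go_south"], random.Random()))
```

The `to_json` and `from_json` methods only produce and read text; where that text is stored between games is left open here.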

This would need the ability to write back to the server to build the long-term memory, so there’s one technical hurdle to overcome. But would it have the same feeling as a human-vs-human encounter? I’ve had feedback on the Algorithmic opposition, and that does raise the heart rate and increase thinking in the first few games. I wonder if the Machine Learning opposition would provide a longer-term learning experience? Please comment with your thoughts below.
