Anonymising your research data

17/03/2017

In our last RDM (research data management) blog post we discussed data protection in general; now let’s take a closer look at anonymisation. The main message is to think about it early and plan well, so that data is kept safe and secure and is handled in line with the Data Protection Act and any funder expectations. Here are a few questions to ask yourself…

Is your consent right? Make sure your consent form states which data will be shared and which will be destroyed, and choose the wording carefully. “Anonymised” data means that it is impossible to identify an individual from that dataset, either on its own or in combination with other available data. This is hard to achieve, so be careful about using terms such as “anonymous” or “de-identified”, and about making guarantees to participants. The ICPSR recommendations suggest stating exactly which data elements will be removed before sharing.

How will you collect and store personal data? Collect only what is absolutely necessary, and make sure that only authorised users can access it. This may mean storing it somewhere that requires a login, such as your University network drive. If you make a backup, for example on an external hard drive, that device should also be protected by a login.

If you’re collecting any sensitive personal data, you must also use encryption, in line with ICO (Information Commissioner’s Office) recommendations; contact the IT Service Desk to install our encryption software and for instructions on using it. “Sensitive personal data” is defined as personal data relating to someone’s race/ethnicity, political or religious beliefs, trade union membership, physical/mental health, sexual life, or offences (committed or alleged).

Personal data, especially sensitive data, should not be stored on unsecured devices. Mistakes can harm individuals and can also be expensive: a police force was fined £120,000 after saving personal data on a memory stick that was then stolen!

Which data needs sharing? Is your research funded by bodies that require you to preserve or share your data at the end of the project? For example, the RCUK councils expect data to be preserved, usually for 10 years from its last use, and shared as openly as possible, with any restrictions identified early in the project and fully justified. Personal data can often be shared as long as it is sufficiently anonymised; the UK Data Archive (usually the repository for ESRC projects) also offers a Secure Lab option, providing controlled access to data that cannot be shared openly.

Are you deleting data securely? As soon as your research no longer needs the identifiable parts of the data, remove them, and use de-identified or anonymised data for any further work. The version containing personal information (the key) may need either to be destroyed or to be retained securely. If you are destroying it, contact the IT Service Desk for secure erasure: simply deleting a file does not stop it being recoverable from the device. Although responsibility for ensuring that personal research data is deleted ultimately lies with the head of department, the project’s nominated data manager may be given the task of arranging deletion, depending on the responsibilities set out in your data management plan.
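Separating the identifiable portion of a dataset from the rest is often done by pseudonymisation: each participant gets a random study ID, the direct identifiers move into a separate key file, and the working dataset keeps only the ID. The Python sketch below assumes records are held as a list of dictionaries; the field names (`name`, `email`, `address`) and the `pseudonymise` function are hypothetical. While the key exists, the working file is not anonymised in the sense defined earlier, because it can still be linked back to individuals.

```python
import secrets

# Hypothetical direct identifiers: list exactly the fields your consent form
# says will be removed before sharing.
DIRECT_IDENTIFIERS = ("name", "email", "address")


def pseudonymise(records, identifiers=DIRECT_IDENTIFIERS):
    """Split records into a de-identified dataset and a separate key.

    Each record gets a random study ID. The key maps study IDs back to the
    removed identifiers and must be stored securely (or destroyed) on its own.
    """
    deidentified = []
    key = {}
    for record in records:
        # Random IDs carry no information about the participant, unlike
        # sequential numbers or hashes of names.
        study_id = secrets.token_hex(8)
        while study_id in key:  # regenerate on the (very unlikely) collision
            study_id = secrets.token_hex(8)
        key[study_id] = {f: record[f] for f in identifiers if f in record}
        kept = {f: v for f, v in record.items() if f not in identifiers}
        kept["study_id"] = study_id
        deidentified.append(kept)
    return deidentified, key


if __name__ == "__main__":
    participants = [
        {"name": "A. Smith", "email": "a@example.org", "age": 34, "answer": "yes"},
        {"name": "B. Jones", "email": "b@example.org", "age": 51, "answer": "no"},
    ]
    data, key = pseudonymise(participants)
    print(data)  # study IDs, age and answer only; no names or emails
```

Keeping `key` in a separate, access-controlled location means that securely destroying it later leaves only the de-identified data, without having to edit the working dataset again.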

What anonymisation steps will you take? If you’re new to anonymisation, the UK Anonymisation Network (UKAN) has an excellent resource: the anonymisation decision-making framework (pdf). You’ll probably need to remove direct identifiers (name, address, photos…) and also remove or edit indirect identifiers, which can identify individuals when combined with other data (e.g. occupation and workplace). Some methods are:

- Reducing precision: e.g. instead of birth dates, give only birth years.
- Aggregating data: e.g. instead of job titles, use occupational categories (the ONS Standard Occupational Classification can help); or instead of a city, use a wider geographical area.
- Hiding outliers: e.g. use income or age bands that do not reveal the highest or lowest values, as these exceptional values can often identify individuals.
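The three methods above can be sketched in a few lines of Python, followed by a simple check of how many records share each combination of indirect identifiers (the smallest such group is what the k-anonymity measure calls k; a group of one means someone stands out). This is a minimal illustration, not a complete anonymisation procedure: the field names, the band widths, and the job-title lookup are all hypothetical, and the category labels are illustrative rather than official ONS SOC codes.

```python
from collections import Counter

# Hypothetical job-title lookup; illustrative labels, not official SOC codes.
OCCUPATION_GROUPS = {
    "staff nurse": "Health professionals",
    "ward sister": "Health professionals",
    "primary teacher": "Teaching professionals",
    "lecturer": "Teaching professionals",
}


def birth_year(birth_date):
    """Reduce precision: an ISO date such as '1984-06-21' becomes 1984."""
    return int(birth_date[:4])


def income_band(income, width=10_000, floor=10_000, ceiling=60_000):
    """Band whole-pound incomes, with open-ended bottom and top bands so
    the lowest and highest earners do not stand out."""
    if income < floor:
        return f"under {floor}"
    if income >= ceiling:
        return f"{ceiling} and over"
    low = (income // width) * width
    return f"{low}-{low + width - 1}"


def generalise(record):
    """Apply all three methods to one raw record."""
    return {
        "birth_year": birth_year(record["birth_date"]),
        "occupation": OCCUPATION_GROUPS.get(record["job_title"].lower(), "Other"),
        "income_band": income_band(record["income"]),
    }


def smallest_group(records, quasi_identifiers):
    """Size of the rarest combination of indirect-identifier values."""
    counts = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return min(counts.values())


if __name__ == "__main__":
    raw = [
        {"birth_date": "1984-06-21", "job_title": "Staff nurse", "income": 28_500},
        {"birth_date": "1984-02-03", "job_title": "Ward sister", "income": 24_000},
        {"birth_date": "1990-11-30", "job_title": "Lecturer", "income": 41_000},
        {"birth_date": "1990-07-14", "job_title": "Primary teacher", "income": 45_000},
    ]
    released = [generalise(r) for r in raw]
    k = smallest_group(released, ["birth_year", "occupation", "income_band"])
    print(k)  # 2: every combination now describes at least two people
```

In the raw data every person is unique; after generalisation each combination of birth year, occupational group and income band covers two people. What counts as a large enough group, and which fields count as indirect identifiers, are judgements for your own project, which the UKAN framework can help with.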

Audio-visual anonymisation is harder, especially if you try to automate it, as Google recently demonstrated when it blurred out a cow’s face! It is better to obtain informed consent for reuse of audio-visual material than to blur images or alter audio. However, you also need to be clear about how long the data will be kept and how participants can withdraw their consent for it to be reused.

Finally, is it worth anonymising your data? Let’s not forget that sometimes it isn’t! If anonymisation would be expensive and time-consuming, and would greatly reduce the usefulness of the data, it is probably not worth doing. You would then need to consider whether the unanonymised version can be retained or reused under stricter access conditions. The UKAN framework compares data security to house security: a house with no doors or windows is fully secure, but its usefulness is reduced to nothing. There is always a balance to be found.

Public domain image from unsplash.com

Categories & Tags:

Leave a comment on this post:

You might also like…

Keren Tuv: My Cranfield experience studying Renewable Energy

Hello, my name is Keren, I am from London, UK, and I am studying Renewable Energy MSc. My journey to discovering Cranfield University began when I first decided to return to academia to pursue ...

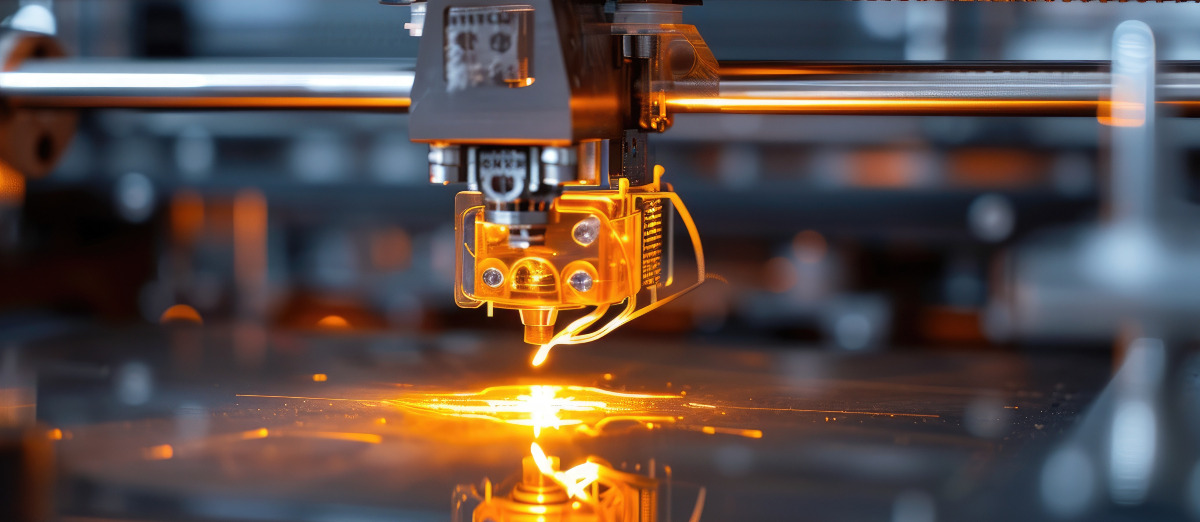

3D Metal Manufacturing in space: A look into the future

David Rico Sierra, Research Fellow in Additive Manufacturing, was recently involved in an exciting project to manufacture parts using 3D printers in space. Here he reflects on his time working with Airbus in Toulouse… ...

A Legacy of Courage: From India to Britain, Three Generations Find Their Home

My story begins with my grandfather, who plucked up the courage to travel aboard at the age of 22 and start a new life in the UK. I don’t think he would have thought that ...

Cranfield to JLR: mastering mechatronics for a dream career

My name is Jerin Tom, and in 2023 I graduated from Cranfield with an MSc in Automotive Mechatronics. Originally from India, I've always been fascinated by the world of automobiles. Why Cranfield and the ...

Bringing the vision of advanced air mobility closer to reality

Experts at Cranfield University led by Professor Antonios Tsourdos, Head of the Autonomous and Cyber-Physical Systems Centre, are part of the Air Mobility Ecosystem Consortium (AMEC), which aims to demonstrate the commercial and operational ...

Using grey literature in your research: A short guide

As you research and write your thesis, you might come across, or be looking for, ‘grey literature’. This is quite simply material that is either unpublished, or published but not in a commercial form. Types ...