New Operating Models – do they deliver the claimed benefits? (Or, never place rocks next to hard places!)

27/09/2017

On our travels, we often see organisations declaring that they’re using new operating models (or are about to). Someone, somewhere will have done some calculations, simulations or forecasting and arrived at the conclusion “we need to re-organise”, “centralise” or “decentralise”. Interestingly, these new operating models are often introduced by newly appointed senior managers and executives looking to make their mark, sometimes on the advice of consultants (“it worked in ‘X’ number of previous management roles / assignments, so it will work here”).

A benefits case is constructed, sometimes with a foregone conclusion based on the model having worked in the past. A team of analysts and some fancy software crunch some data and out comes the desired answer!

There are one or two problems with this approach! For example:

- Because an operating model worked at some point in time for one organisation, in the context of the services it was offering in its market or sector, it does not follow that the model will work as well at a different time and in a different context. It’s always tempting to copy what looks like a good idea in one situation, only to find that in one’s own context it performs worse than the present arrangement – in some cases disastrously. Common use of Best Practice is an example – see previous blog https://cranfieldcbp.wordpress.com/2017/06/12/the-use-of-best-practice-the-well-trodden-path-to-mediocrity/

- Because many analytic tools are based on spreadsheets and macros, there is a tendency to extract a slug of data and analyse it out of context – e.g.:

  - the business may be strongly seasonal – so any extract of data covering less than 2 years (and preferably more) may yield deceptive results

  - the business may be strongly cyclic on a daily or weekly basis, so that using averages (particularly when applying them to resourcing) can yield deceptive or inaccurate results

  - all businesses experience variation (in control as well as out of control), and most spreadsheet applications do not properly take this into account – see previous blog https://cranfieldcbp.wordpress.com/2017/07/10/forecasting-prediction-is-very-difficult-especially-if-its-about-the-future/

  - all businesses will be processing waste- and failure-demand as well as value-demand, so unless the data being analysed covers the whole end-to-end system (i.e. the analysis is Systems-thinking), the result could be just processing waste better (rather than removing it)

  - related to the above, some organisations propose new models based on “efficiency reviews” that do not look at the whole system, and certainly not at the outcomes and customer experience

  - and a final (but not exhaustive) point is that most of the resultant reorganisations fracture long-established person-to-person links that made the business processes work – these have to be rebuilt after each reorganisation
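The point about averages and cyclic demand can be shown with a small worked example. This is only a sketch: the hourly volumes, the `CALLS_PER_AGENT_PER_HOUR` capacity figure and the function names are all made up for illustration and do not come from any real organisation.

```python
import math

# Hypothetical hourly call volumes for one working day (illustrative only).
HOURLY_DEMAND = [20, 35, 80, 120, 90, 40, 30, 25]

# Assumed handling capacity of one agent; not a real benchmark.
CALLS_PER_AGENT_PER_HOUR = 10


def agents_needed(calls):
    """Smallest whole number of agents able to handle `calls` in one hour."""
    return math.ceil(calls / CALLS_PER_AGENT_PER_HOUR)


def flat_staffing(hourly_demand):
    """Staffing level you get by resourcing to the daily average."""
    average = sum(hourly_demand) / len(hourly_demand)
    return agents_needed(average)


def shortfall_by_hour(hourly_demand):
    """List (hour index, missing agents) for hours the average under-resources."""
    flat = flat_staffing(hourly_demand)
    return [
        (hour, agents_needed(calls) - flat)
        for hour, calls in enumerate(hourly_demand)
        if agents_needed(calls) > flat
    ]


if __name__ == "__main__":
    print("Agents if staffed to the average:", flat_staffing(HOURLY_DEMAND))
    for hour, missing in shortfall_by_hour(HOURLY_DEMAND):
        print(f"Hour {hour}: short by {missing} agents")
```

With these made-up numbers the average suggests 6 agents all day, yet the three busiest hours are short by 2, 6 and 3 agents while the quiet hours are over-resourced. The spreadsheet total balances; the customer experience does not.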

We’ll quote just two examples (out of a pool of many we have encountered) to make the point:

- In a UK-based global telecommunications company the business comprised a Planning Function and a Delivery Function. A US-based global consulting firm came in, split the process into two separate functional areas and, in Planning, outsourced the survey work, all justified on productivity (efficiency) grounds. On paper, the claimed benefit was an annual saving in Planning of at least £10m. The end result was massive costs moved into the Delivery function, driven largely by “Waste and Failure demand”, and appalling customer service. One consequence was that the company narrowly escaped break-up by Ofcom because of absurdly long over-runs in delivery of broadband capability.

- In several public sector organisations, we see flip-flopping between centrally organised functional structures and decentralised “push the expertise out to the front-line” structures – with flip-flopping occurring every 5 – 10 years or so (in the private sector we see this happen more frequently, usually with a change in senior management). It’s difficult to establish the waste generated or the negative impact on customer service!

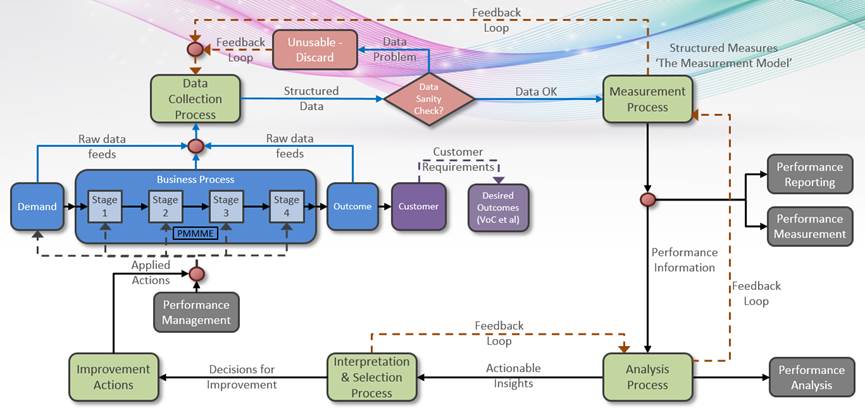

It seems to me that the only way to establish whether a new operating model is actually delivering is to ensure we’re measuring the right things in the right way before and after deployment of the model (preferably, if possible, after a partial deployment), looking for data-driven evidence that the KPIs to be improved are showing a sustained, statistically demonstrable improvement. For this, you need a “closed-loop” control system (unfortunately Matthew Syed in Black Box Thinking uses the term open loop to mean the same thing), in which the whole system (not just the part where the operating model has changed) is monitored continuously before, during and after application of the new model. See example below where the business process (in blue) delivers the (hopefully) desired outcomes and the Applied Action in this case is the new operating model:

Without this, when someone asks if the new model is working – well, like Dilbert, you may be stuck between a rock and a hard place!
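One way to look for sustained, data-driven evidence of improvement before and after a change is an individuals (XmR) control chart. The sketch below is an assumption about how such a check might be done, not a description of any particular tool: it takes limits from the baseline period using the standard 2.66 × average moving range, then applies a run rule to the post-change data. The run length of eight is a common choice (some rule sets use nine), and the weekly lead-time figures are invented.

```python
from statistics import mean


def xmr_limits(baseline):
    """Return (lower, centre, upper) natural process limits for an XmR chart."""
    centre = mean(baseline)
    # Moving range: absolute difference between consecutive observations.
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    spread = 2.66 * mean(moving_ranges)
    return centre - spread, centre, centre + spread


def sustained_shift(after, centre, run_length=8, lower_is_better=True):
    """True if `run_length` consecutive points fall on the better side of centre."""
    run = 0
    for value in after:
        improved = value < centre if lower_is_better else value > centre
        run = run + 1 if improved else 0
        if run >= run_length:
            return True
    return False


if __name__ == "__main__":
    # Hypothetical weekly delivery lead times in days (illustrative only).
    before = [12, 14, 11, 13, 15, 12, 14, 13, 12, 14]
    after = [12, 11, 10, 11, 12, 10, 11, 12, 11]

    lower, centre, upper = xmr_limits(before)
    print(f"Baseline limits: {lower:.2f} to {upper:.2f}, centre {centre}")
    print("Sustained improvement:", sustained_shift(after, centre))
```

A single good week inside the baseline limits proves nothing; the run rule asks for an unbroken sequence on the better side of the old centre line before anyone claims the new model is working. The same check should be run on KPIs for the whole system, not only the function that was reorganised.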

David Anker
